
The following represents my individual reflections on the Assessment and Feedback for Learning module as part of the PgCAP.

Starting point

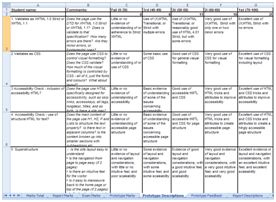

My assessment and feedback practice prior to embarking on this module was absorbed from colleagues, from personal experience, and from the experience of setting, marking, and reflecting on assignments over the last decade. Lave and Wenger (1991) discuss ‘Communities of Practice’, which have a shared and committed domain of interest and a community of members who engage in shared activities and share information, helping and learning from each other. The pooling of assessors’ expertise is explored in more detail by Orr (2007), who discusses how marks are constructed by academics in the context of a study of marking and moderation at a University Art department. The Higher Education Academy (HEA), in its 2012 report on assessment, “A Marked Improvement,” emphasised the value of a community of professionals.

Early on I embraced the ‘rubric’ style approach based on the Intended Learning Outcomes (ILOs) in the Module Specifications I was handed. An assignment is created to enable students to address and achieve the ILOs. Assessment criteria are set to gauge how well those ILOs have been achieved (University of Salford 2012). Being an Information Systems (IS) scholar, I created a spreadsheet based upon one created before me by another IS colleague and used, in various forms, by several of us. The rubric of grade descriptors is published online in the Assessment section of Blackboard along with the assignment text itself. The spreadsheet includes three worksheets:

- The rubrics – one paragraph for each decile of each of the assessment criteria, (0-10, 11-20, 21-30 etc)

- The individual marks I give to each student against each of the various criteria

- A collection of the specific rubric paragraphs appropriate to each mark for each student, pulled in via a Lookup function

| 1. The Rubrics | 2. The individual marks for each criterion |
| --- | --- |
| 3. Individual paragraphs pulled through via Lookup | 4. Mail-merged Individualised Feedback sheet |

Finally, a ‘free text’ comment field is added alongside the rubric paragraphs for me to add any specific remarks I may have for each student. This is an important feature where I can introduce elements of ‘feed forward’ feedback to help students address issues that may improve their learning for later components of this module, and for the rest of their course. I believe student engagement with feedback can be enhanced if it is carefully planned to ‘feed forward’; i.e. focuses on information about “student work and progress which students may use to inform their future learning” (Bloxham and Boyd, 2007: p234), and not solely applicable to the assignment/component in question. A simple mail-merge from this spreadsheet into a more user-friendly Word document enables me additionally to merge these collections of specific paragraphs into an individualised Feedback sheet with the student’s name and marks as well as the relevant paragraphs from the rubric and the personalised comment field, which can then be converted to PDF and emailed to the student using the Adobe mail-merge function in Outlook.
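The lookup-and-merge step described above can be sketched in a few lines of Python. This is only an illustration, not the spreadsheet itself: the criterion names, the rubric wording, and the function names `decile_band` and `feedback_sheet` are all hypothetical, and it assumes each criterion is marked out of 100 so that a mark maps directly onto one of the decile bands.

```python
# Hypothetical rubric: one paragraph per decile band for each criterion.
# Only two bands are filled in here; a real rubric would cover all ten.
RUBRIC = {
    "Analysis": {
        "51-60": "Sound analysis with some gaps.",
        "61-70": "Thorough analysis throughout.",
    },
    "Presentation": {
        "51-60": "Clear but uneven presentation.",
        "61-70": "Well structured and clear.",
    },
}


def decile_band(mark: int) -> str:
    """Map a mark (0-100) to its band label: 0-10, 11-20, ..., 91-100."""
    if not 0 <= mark <= 100:
        raise ValueError(f"mark out of range: {mark}")
    if mark <= 10:
        return "0-10"
    low = ((mark - 1) // 10) * 10 + 1
    return f"{low}-{low + 9}"


def feedback_sheet(name: str, marks: dict[str, int], comment: str = "") -> str:
    """Assemble an individual feedback sheet, like the mail-merge step."""
    lines = [f"Feedback for {name}"]
    for criterion, mark in marks.items():
        band = decile_band(mark)
        # Equivalent of the spreadsheet Lookup: pull the matching paragraph.
        lines.append(f"{criterion}: {mark} ({band}) {RUBRIC[criterion][band]}")
    if comment:
        # The free-text field carries the 'feed forward' remarks.
        lines.append(f"Comment: {comment}")
    return "\n".join(lines)


sheet = feedback_sheet(
    "Student A",
    {"Analysis": 65, "Presentation": 58},
    comment="Apply the same structure in the next component.",
)
```

Keeping the rubric text separate from the marks, as the worksheets do, means the descriptors can be published to students unchanged while the per-student sheet is generated from the marks alone.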

On many qualitative programmes there is insecurity in awarding a mark just below a boundary division, resulting in “a partial mark exclusion zone” described by Bridges (1999). The compound effect of this exclusion zone when considering a brace of modules within a school or department could significantly impact the number of overall first and upper seconds that are awarded. In order to avoid the class boundary effect it has been my practice to scan through the totals in the spreadsheet for instances where the marks I have given for each criterion add up to a 39 (UG pass at 40), 49 (PG pass at 50), 59 (UG 2i or PG merit), or 69 (UG 1st), etc. I decide whether the student’s achievement should be graded above or below the class boundary, and adjust marks for individual criteria to make the total shift in the desired direction. This element of subjectivity is recognised by the third tenet of the Higher Education Academy (HEA) 2012 report on assessment, “A Marked Improvement”: “Recognising that assessment lacks precision.”
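The scan for totals sitting one mark below a class boundary could be sketched as follows. This is a hypothetical illustration rather than the spreadsheet formula actually used: the student identifiers and marks are invented, the function name `flag_borderline` is an assumption, and only the boundaries named above (40, 50, 60, 70) are included.

```python
# Class boundaries named in the text; others could be added.
BOUNDARIES = (40, 50, 60, 70)


def flag_borderline(marks_by_student: dict[str, list[int]]) -> list[str]:
    """Return students whose total is exactly one mark below a boundary."""
    flagged = []
    for student, criterion_marks in marks_by_student.items():
        total = sum(criterion_marks)
        if total + 1 in BOUNDARIES:
            flagged.append(student)
    return flagged


# Invented example data: criterion marks that add up to the module total.
marks = {
    "S1": [15, 12, 12],  # total 39
    "S2": [20, 18, 14],  # total 52
    "S3": [25, 24, 20],  # total 69
}
to_review = flag_borderline(marks)
```

The sketch only flags the cases; the decision about which side of the boundary a piece of work belongs on remains an academic judgement, as described above.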

I have been following this process for some years, and have often been praised by External Examiners and students alike for its completeness and clarity. Recently, where classes are small (for PG students in particular), I have also given a printed copy of this Feedback sheet to each student during a five-minute one-to-one, face-to-face feedback session at the end of the module. The feedback from students at Staff-Student Committee meetings, however, has reinforced Brown and Glover’s finding that students “perceived written feedback as the most useful form of feedback” (Brown and Glover 2006 p81). Other literature contradicts this, but, as Ferris (2004) realised, the broad spectrum of different kinds of feedback depends on many factors (such as teaching contexts and the level of the students), and he acknowledged that there is no “ideal” feedback procedure.

The above process, I have discovered through my reading associated with this module, is an efficient method of putting into practice the assumptions around the notion of Learning Transfer (Hager & Hodkinson 2009). It has been instructive indeed to question those assumptions, and this has enabled me to take a fresh look at the entire process of assessment and feedback.

The process of Problem Based Learning in the Module

The journey of the module began with the setting up of groups in which to address three PBL scenarios. I refer to my previous post on the nature of PBL.

As regards my own experience of the process of group working on these PBL Scenarios I am sad to report that in my group there was one member who seemed quite reluctant to engage as I would have wished, and this made my experience of groupwork on this module quite poor. Roles and responsibilities amongst the four of us were agreed: that we would each write 200 words towards each of the three scenarios, and that then one of us would pull these contributions together into a document, and at the end one of us would unify the three documents stylistically. I was keen for us to make use of technology enhanced learning, and set up a shared Google Drive space on the first day, for the four of us to use.

While I was unwell, away from campus, and unable to challenge such a group decision, one member of the group was insistent on refusing to make use of technology enhanced learning, and made certain the other members of the group agreed not to use it either. This dogged refusal to engage with any TEL has held back the whole group, and for myself, as an Information Systems scholar, has been particularly frustrating. This of course must also at times mirror the experiences of students in groupwork situations, and indeed I have had reports from students in groupwork I have assigned concerning the lack of engagement of one member who holds back the rest. In my opinion this serves as a clear example of why it is best to ensure, when assessing group work, that individual contributions are marked, and that individual marks are not simply derived from the output of group working. This is also the clear outcome of the research undertaken by the Centre for the Study of Higher Education at the University of Melbourne (2002).

Key points learnt from the PBL Scenarios

The three PBL scenarios presented three different aspects of Assessment and Feedback for our study – marking, feedback, and assessment design. The key points I learnt from each of these were as follows:

- Marking: My practice regarding an ‘exclusion zone’ around class boundaries is common. The range of marks I am prepared to give in what is ultimately quite a qualitative subject area seems, however, to be relatively wide rather than clustered in the middle. I therefore consider my current practice to be worth continuing, although in future I may spend some time talking to students at the beginning of the module about the rubric published on Blackboard, and invite more discussion on its meaning.

- Feedback: Written feedback seems to be supported as of high importance, but the student ultimately is most interested in the grade, and little else. Formative feedback is however also very important, and should be clear, focus on the learning rather than the marks, and be aligned to the assignment criteria. This formative feedback, during the module rather than at the end, is something which – even if it is not immediately valued by the student – is likely to best ensure a good learning experience.

- Assessment design: Groupwork is tricky and must be handled carefully! When attention is paid to ensuring it will work well, however, it can be very fruitful. Nonetheless, groups do not always gel well, and frequently – both in my own experience and as reported by students – it is the stubbornness of one member of the group that spoils it for the rest. Individual assessment is therefore of paramount importance, and peer-assessment should be included to ensure that group members have the opportunity to inform the tutor what has transpired within the group.

Looking back at my former practice

In general, it is clear that my practice in the past was in keeping with sector norms. The fact that I produced good written feedback and distributed it electronically in a clear and readily understandable format, beyond simply giving a mark, was a plus-point in my favour (Hepplestone et al 2011).

The fact that my formative feedback was sporadic, and individualised largely to those who sought it rather than formalised to target all students, was a negative point against my former practice. The complete lack of any groupwork, peer or self-assessment in my assignment design was perhaps based in a simple prejudice against groupwork that I hadn’t given much thought to. Problem based learning, however, in its various guises, has been a part of my former practice without my calling it such. An understanding of it can now help me bring it into my future assessment design consciously and intentionally.

Looking to the future

I have already had an opportunity to put this learning into practice in this semester, in my own teaching practice, in the delivery and assessment of a PG module at MediaCity, for which I was module leader, and designed the assignment.

The assessment for the module was divided into two halves, the second half being an exam. The first half I addressed innovatively as a kind of PBL scenario. I chose PBL because it encourages a deeper approach to identifying relevant issues, recognizing individual learning needs, acquiring relevant knowledge, and subsequently applying that knowledge to a given situation (Wilkie, 2000). The task set was as follows: ‘In groups, undertake a Case Study of a member of staff at MediaCity in relation to an external organisation they are working with, and create a digital artefact documenting that case study. Assessment will be based on an individual report detailing your experience of the groupwork, and what you have learnt about partnership models through this process.’

Effective group work in PBL exercises can facilitate the refining of generic key skills sets demanded by today’s employers (Centre for the Study of Higher Education at the University of Melbourne 2002): teamwork skills (working within a team; leadership), analytical and cognitive skills (analysing task requirements; questioning; critically interpreting source material; evaluating the work of others), collaborative skills (conflict management and resolution; accepting intellectual criticism; flexibility; negotiation and compromise), and organizational / time-management skills. All these skills are paramount for graduates of the Business School and the PBL groupwork approach produced opportunities for the students to address each of them. Feedback from the students suggested that this worked very well. One student in particular included in his summative individual report a paragraph about how instructive the groupwork process had been and that it had made a great impact upon him personally: “This experience has been a very good one for me and I often visit the digital artefact to see what we have created in such a short period of time. I intend to work in more teams in the future professionally and socially so as to enjoy the benefits of different individual resources with the aim of achieving shared goals; increasing my skill set and becoming a better person.” (Umeigbo 2013)

The class consisted of two cohorts, one that had begun the previous semester, the other just beginning. I chose the group membership, to prevent clustering of cohorts into groups. As my main contribution to PBL Scenario Three in this module discusses, I produced a clear guide to group working for my students, encouraging them to allot roles and responsibilities at the outset, to agree strategies, and to schedule group meetings with everyone’s needs, travel restrictions, etc. taken into account. Most importantly, I made it clear in this guide that the output of the groupwork would not itself be assessed, but that the individual reports about the groupwork would be the assessed, formal submission for the assignment. The design of this report is intended to reflect, in part, the kind of Performance Development Review individual employees experience in companies and organisations, where their contribution to team working and project work is assessed on an individual level by their manager. This individual report had to include:

i. Description of the groupwork undertaken

ii. Description of your experience

iii. Report on precisely what you did

iv. A list of the role(s) and responsibilities of the rest of the group and what they did. Include your assessment of others’ work with feedback. This helps ensure all members work towards the joint project.

Formative feedback, helping to guide each student towards achieving the intended learning outcomes, can often be more important than the final grade they achieve in ensuring a good learning experience. The inclusion of formative feedback in my practice began with the abovementioned groupwork assignment, where I gave formative feedback on the students’ collectively produced (and not assessed) digital artefact, and on their own individual (and assessed) digital portfolios. It is something I intend to incorporate more in future teaching delivery. The Higher Education Academy (HEA) (2012; p9) declared a shift in emphasis from summative to formative assessment to be “the change that has the greatest potential to improve student learning”. The Salford University regulations on Assessment (2012/13 p21) recommend feedback be returned within 15 days but acknowledge that where this summative assessment comes at the end of a module it “would not capture the spirit of assessment and feedback at Salford”, unless it were accompanied by formative feedback earlier in the module. The peer assessment and self-assessment aspects of groupworking, alongside this formative feedback framework, should help produce a more ‘feed-forward’ approach to my future assessment design (Bloxham and Boyd, 2007: p234).

Conclusion

All in all I believe this module has taught me a good deal – both about what I was already doing, good and bad – and about what I can do to bolster the best of what I was already doing, and fill in the gaps with new and innovative methods of designing assignments and providing formative feedback.

References

Black, P. Wiliam, D. (1998). Assessment and Classroom Learning, Assessment in Education, 5(1), pp7-74.

Bloxham, S. Boyd, P. (2007). Providing effective feedback, In Bloxham, S. Boyd, P. (2007). Developing Effective assessment in Higher Education (pp103-116). Maidenhead: Open University Press.

Bridges (1999) ‘Are we missing the mark?’ Times Higher Education 3/9/1999 http://www.timeshighereducation.co.uk/story.asp?storyCode=147821&sectioncode=26

Brown, E & Glover, C (2006) Evaluating Written Feedback, in Innovative Assessment in Higher Education, edited by Cordelia Bryan, Karen Clegg, London: Routledge. pp81

Centre for the Study of Higher Education (CSHE) (2002). Five Practical Guides; Assessing Group work, Australian Universities Teaching Committee. Available online http://www.cshe.unimelb.edu.au/assessinglearning/03/group.html (accessed May 2013).

Hager, P and Hodkinson, P (2009) Moving beyond the metaphor of transfer of learning. British Educational Research Journal 35(4) pp. 619–638

Hepplestone, S. Holden, G. Irwin, B. Parkin, H. J and Thorpe, L. “Using technology to encourage student engagement with feedback: a literature review,” Research in Learning Technology 19(2), 117-127 (2011).

Higher Education Academy, (2012). A Marked Improvement, Transforming assessment in higher education, The Higher Education Academy, http://www.heacademy.ac.uk/assets/documents/assessment/A_Marked_Improvement.pdf Accessed March 2013.

Lave, J. Wenger, E. (1991). Situated Learning: Legitimate peripheral participation, Cambridge: Cambridge University Press.

Orr, S (2007) Assessment moderation: constructing the marks and constructing the students, in Assessment & Evaluation in Higher Education 32(6)

Umeigbo, P (2013) An International Communication Association based Case study Report Information Architecture and e-Commerce module towards MSc. Information Systems Management in Salford Business School.

University of Salford (2012). University Assessment Handbook, A Guide to Assessment Design, Delivery and Feedback. http://www.hr.salford.ac.uk/cms/resources/uploads/File/UoS%20assessment%20handbook%20201213.pdf Accessed March 2013.

Wilkie, K. (2000). The Nature of Problem-based Learning. In Glen, S. Wilkie, K. (eds) 2000, Problem-based learning in nursing. Macmillan, Basingstoke.